Introduction and background

Oracle Database offers numerous migration options, particularly for 12c and later releases. Databases within a given environment serve different workload types with varying SLAs. The migration approach should therefore be selected by evaluating the requirements of each database (or set of databases) separately, to determine the optimal method for that specific environment. The articles in this migration series provide a practical framework for Oracle database migration. They describe the requirements and limitations of each method and highlight common mistakes and challenges, based on real-life migration projects.

This article addresses migration project planning and project management, with only brief reference to the migration methods themselves. Subsequent articles in the series are more technical in nature: they outline the capabilities and restrictions of each method and focus on implementation.

Requirements definition

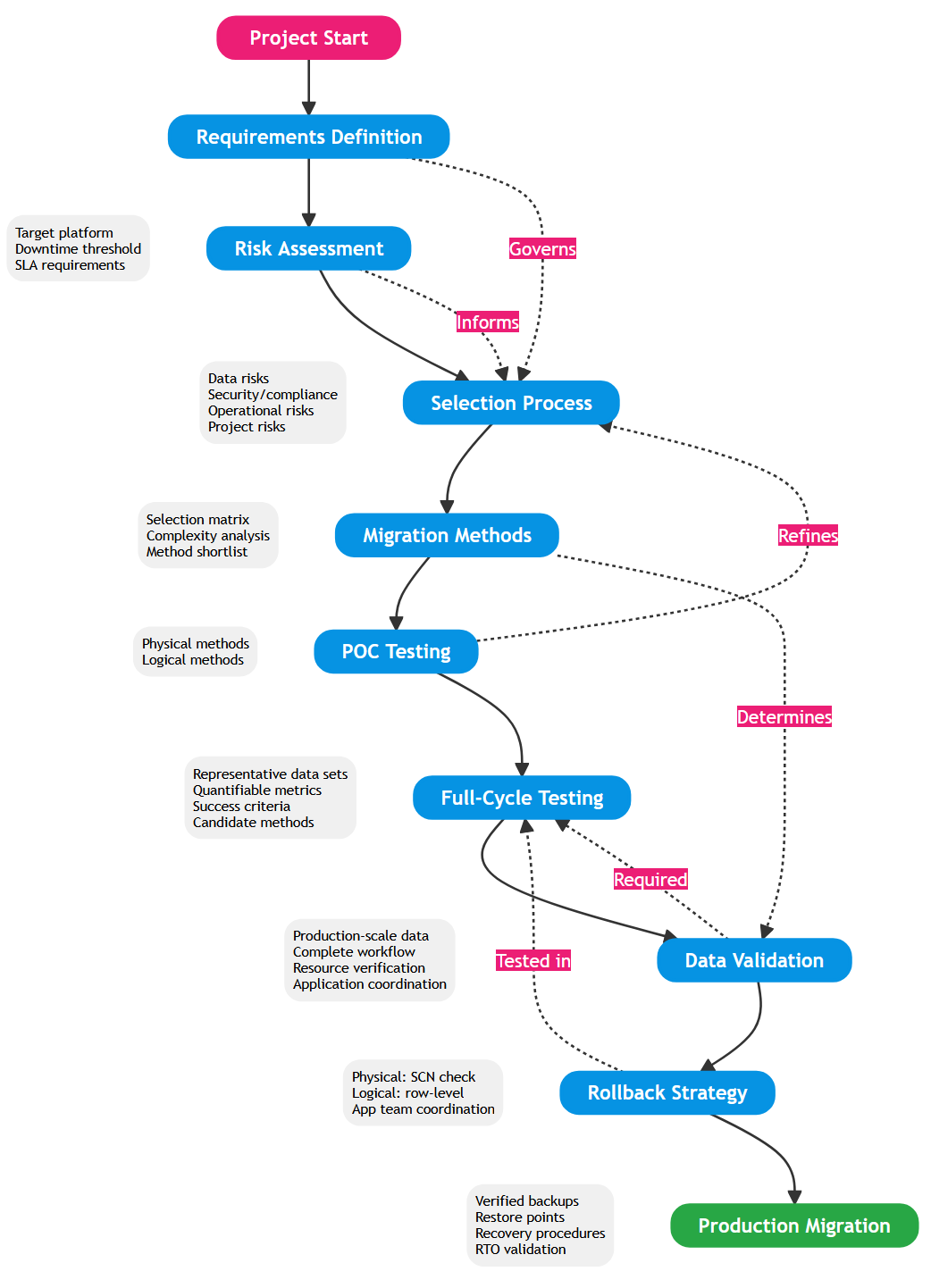

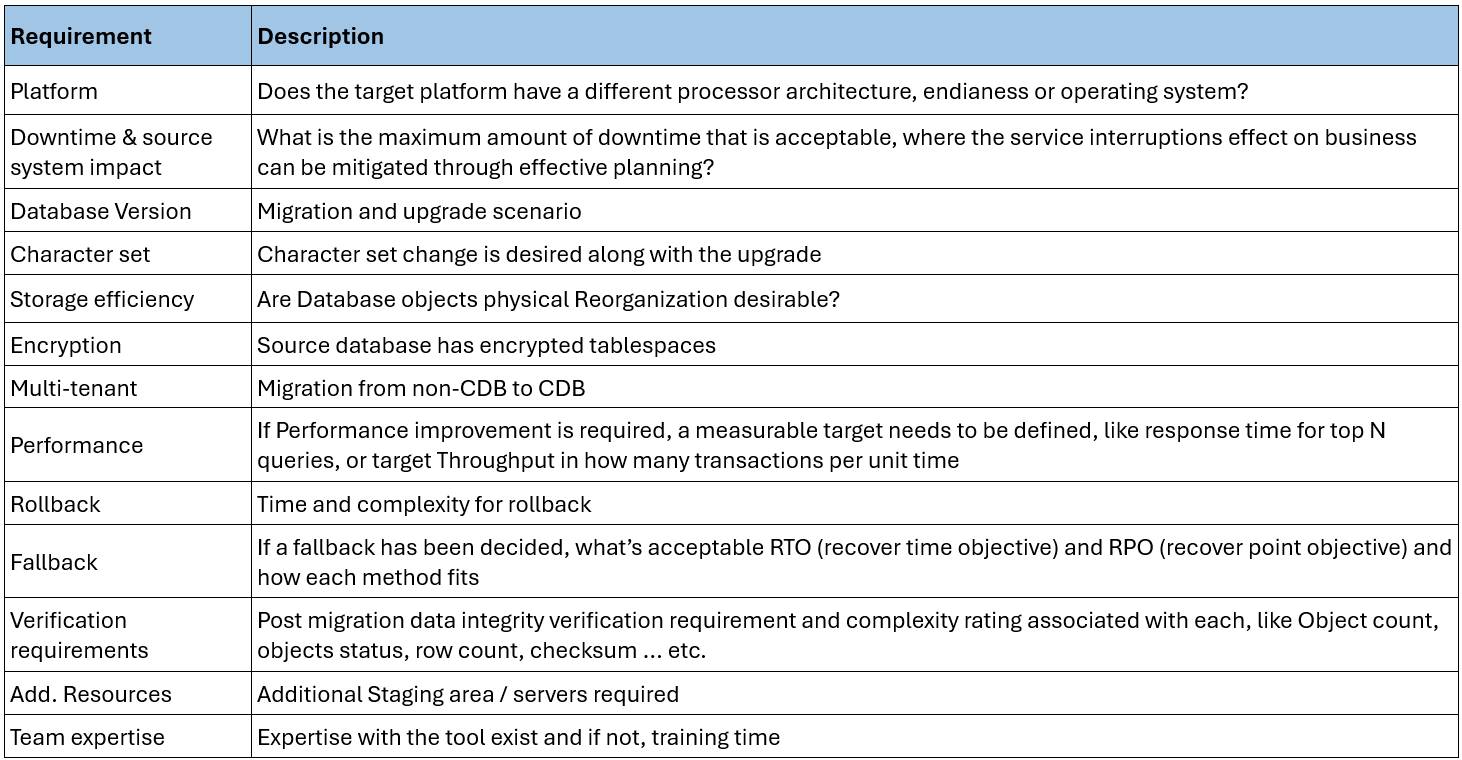

Early project preparation starts with a clear requirements definition, which governs the selection criteria for the migration approach. In practice, defining realistic requirements can be challenging. Table 1-1 below offers an example of environment-specific requirements that affect the evaluation of migration methods. Two non-negotiable requirements should be clear at project start for the first round of migration method evaluation. The first is the target database platform and the second is the tolerable downtime during live migration. A well-justified downtime limit effectively filters out unsuitable methods. For example, a stringent downtime requirement disqualifies a simple physical RMAN restore and calls for more sophisticated approaches, such as zero-downtime or replication-based methods, which have longer implementation timelines. The platform requirement is tightly related to the dimensioning task performed in the very early stages of the project, a topic beyond the scope of this discussion.
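The downtime filter described above can be sketched in a few lines of Python. This is only an illustration: the method names are taken from this article, but the downtime figures are hypothetical placeholders, not measurements, and `MethodEstimate` and `filter_by_downtime` are names invented for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MethodEstimate:
    name: str
    estimated_downtime_min: float  # hypothetical placeholder, not a measured value


def filter_by_downtime(methods, max_downtime_min):
    """Keep only methods whose estimated downtime fits the tolerated window."""
    return [m for m in methods if m.estimated_downtime_min <= max_downtime_min]


# Illustrative candidates; real estimates come from the environment itself.
candidates = [
    MethodEstimate("RMAN restore", 480),
    MethodEstimate("Data Pump export/import", 600),
    MethodEstimate("GoldenGate replication", 15),
]

eligible = filter_by_downtime(candidates, max_downtime_min=60)
print([m.name for m in eligible])
```

With a 60-minute limit, only the replication-based candidate survives in this made-up data set, which mirrors the point that a strict downtime requirement pushes the project toward more sophisticated methods.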

Risk Assessment

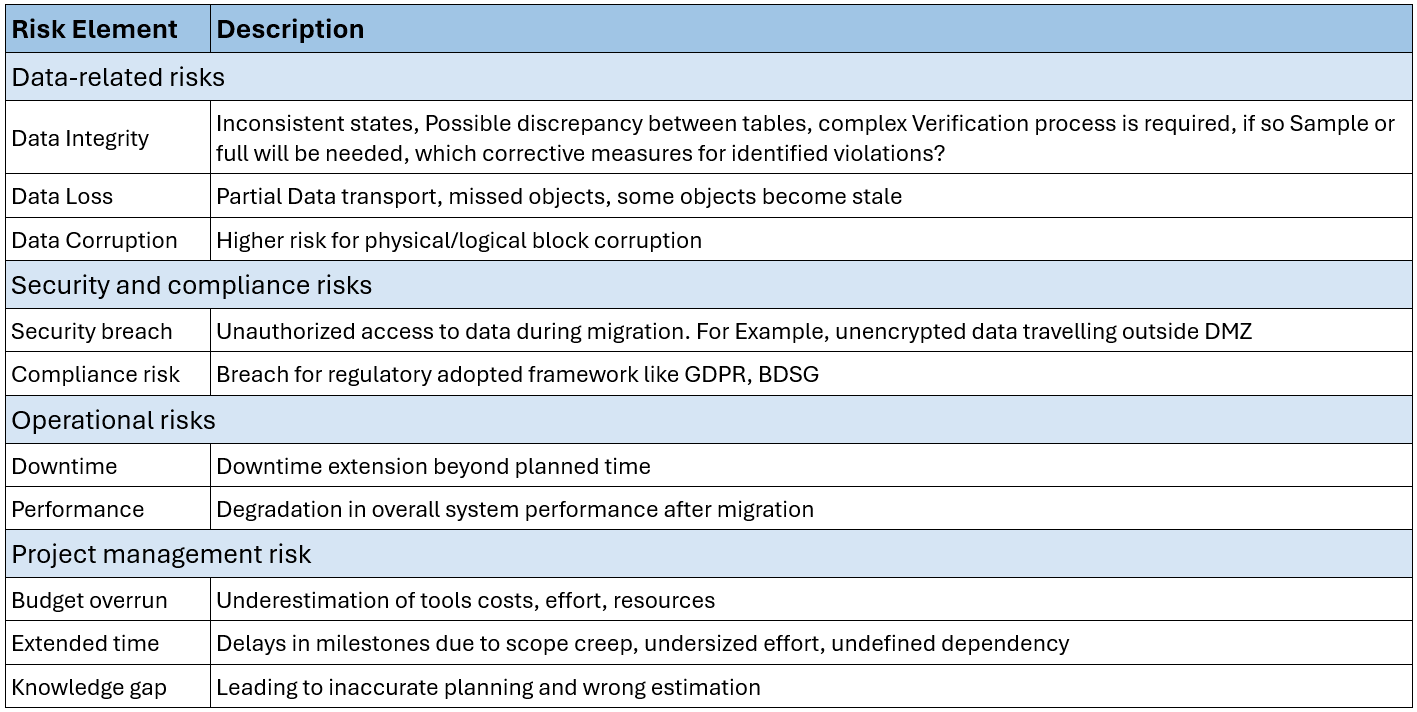

The criticality of a database migration is self-evident, which elevates the importance of rigorous risk evaluation. A comprehensive understanding of the source environment hardware and the target version capabilities is essential for effective risk assessment. Thorough stakeholder discussions help assign realistic weights to risk factors. Some organizations are more risk-averse than others, so challenging internal discussions are key to assigning accurate ratings to risk elements and to avoiding costly decisions based on inflated risk assessments. Table 1-2 shows an example of key risk elements.
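One common way to turn such discussions into comparable ratings is a likelihood-times-impact score. The article does not prescribe this formula; the sketch below assumes it, and the risk names and ratings are hypothetical examples rather than the contents of Table 1-2.

```python
from typing import NamedTuple


class Risk(NamedTuple):
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)


def exposure(risk: Risk) -> int:
    """Simple risk exposure: likelihood multiplied by impact."""
    return risk.likelihood * risk.impact


def rank_risks(risks):
    """Return risks ordered from highest to lowest exposure."""
    return sorted(risks, key=exposure, reverse=True)


# Hypothetical risk elements agreed with stakeholders.
risks = [
    Risk("Migration window overrun", 3, 5),
    Risk("Unsupported feature on target release", 2, 4),
    Risk("Fallback procedure untested", 2, 5),
]

for r in rank_risks(risks):
    print(f"{exposure(r):>2}  {r.name}")
```

Keeping likelihood and impact as separate inputs makes an inflated rating easier to challenge, because stakeholders have to justify each number on its own.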

Once both requirements have been clearly identified, a preliminary selection matrix can be established. This matrix can also consider other risk factors, such as complexity, estimated effort, planned testing time, and rollback and fallback scenarios. At this early stage of the project, the matrix holds only initial ratings for many dimensions. Complexity is difficult to measure accurately because each environment differs, and migration methods yield varying scores depending on data size, object count, object types, and database features. The role of the matrix in this phase is to produce a shortlist of migration methods eligible for the next evaluation phase, the proof-of-concept (POC) test, which can be run on a smaller data subset. The goal of the POC is to reach a verifiable score for each method, giving better input for matrix dimensions such as complexity and fallback scenario. It is critical to define precise success criteria for the POC before testing begins (e.g., “POC is successful if data transfer rate exceeds xx MB/s”). The POC phase concludes with a more solid evaluation, leading to a shortlist of 2-3 methods eligible for full-cycle testing.

Selection Process

With requirements defined, a preliminary selection matrix can be established. This matrix should also consider risk factors including complexity, estimated effort, planned testing time, and rollback scenarios. At this stage, the matrix contains only preliminary ratings for its elements. Complexity is difficult to measure accurately because each environment differs, and migration methods yield varying scores depending on data size, object count, object types, and database features. The matrix’s purpose is to generate a shortlist of methods for POC testing.

These methods proceed to the POC using representative data subsets. The goal of this phase is to establish a quantifiable score for each method on the selection matrix elements, such as actual complexity and fallback scenario viability. Only after testing can a real-world evaluation be conducted, accounting for environment-specific and release-specific challenges and leading to an informed selection decision.
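A selection matrix of this kind can be expressed as a weighted score per method. The following is a minimal sketch, assuming a 1-5 rating scale where higher is better; the criteria names follow the text, while the weights, ratings, and the labels "Method A" to "Method C" are hypothetical.

```python
def weighted_score(ratings, weights):
    """Weighted average of ratings (assumed 1-5, higher is better)."""
    total_weight = sum(weights.values())
    return sum(ratings[c] * w for c, w in weights.items()) / total_weight


def shortlist(matrix, weights, top_n):
    """Return the names of the top_n methods by weighted score."""
    ranked = sorted(
        matrix,
        key=lambda name: weighted_score(matrix[name], weights),
        reverse=True,
    )
    return ranked[:top_n]


# Hypothetical weights agreed with stakeholders.
weights = {"complexity": 3, "effort": 2, "testing_time": 1, "rollback": 4}

# Hypothetical preliminary ratings; the POC replaces them with measured values.
matrix = {
    "Method A": {"complexity": 4, "effort": 4, "testing_time": 3, "rollback": 2},
    "Method B": {"complexity": 2, "effort": 2, "testing_time": 2, "rollback": 5},
    "Method C": {"complexity": 3, "effort": 3, "testing_time": 2, "rollback": 3},
}

print(shortlist(matrix, weights, top_n=2))
```

Because the ratings live in plain data, the same function can be rerun after the POC with updated numbers, which makes the shift from preliminary to verified scores explicit.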

A more elaborate discussion about migration method selection criteria can be found here.

Database Migration Methods overview

Migration methods divide into two categories, logical and physical, distinguished by the type of object transferred during migration. Physical methods operate on lower-level constructs from a data dictionary perspective: data is transported at the datafile or data block level. RMAN, for example, belongs to this category. Logical methods move data at the database object level, such as tables and partitions. The source database must therefore be accessible for logical methods. Data Pump and GoldenGate are examples of such methods. This distinction has practical implications. For example, logical methods are more demanding during post-migration validation. A more extensive discussion of the pros and cons of the migration methods can be found in the Migration methods article.

Testing

The importance of testing can’t be stressed enough. It facilitates accurate time planning in the early phases and guards against unpleasant surprises during execution. Testing follows a two-phase approach.

Phase 1: Proof of Concept (POC): POC testing exercises the shortlisted methods to feed the evaluation matrix. The goal is to establish accurate ratings for evaluation dimensions such as complexity, performance, and resource requirements. The POC need not replicate production data volume at 1:1 scale, but it must include representative samples covering key data volumes, object types, and database features. This phase produces quantifiable metrics that refine preliminary assessments and eliminate unsuitable methods.
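As an illustration of how a POC metric can be checked against a success criterion and projected to production scale, consider the sketch below. It assumes that throughput scales linearly with data volume, which rarely holds exactly in practice, and all volumes, timings, and thresholds are hypothetical.

```python
def throughput_mb_s(transferred_mb, elapsed_s):
    """Average transfer rate observed during the POC run."""
    return transferred_mb / elapsed_s


def projected_hours(production_mb, rate_mb_s):
    """Naive linear projection of transfer time at production volume."""
    return production_mb / rate_mb_s / 3600


def poc_passes(rate_mb_s, required_mb_s):
    """Success criterion: measured rate must exceed the agreed threshold."""
    return rate_mb_s > required_mb_s


# Hypothetical POC run: 90,000 MB moved in 600 seconds.
rate = throughput_mb_s(transferred_mb=90_000, elapsed_s=600)

# Hypothetical production volume of 5,400,000 MB.
hours = projected_hours(production_mb=5_400_000, rate_mb_s=rate)

print(f"rate={rate:.0f} MB/s, projected={hours:.1f} h, "
      f"passes={poc_passes(rate, required_mb_s=100)}")
```

The projection is only a first estimate; full-cycle testing with production-scale data remains the place where the real duration is confirmed.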

Phase 2: Full-Cycle Testing: Full testing uses production-scale data and validates the complete migration workflow. Multiple test iterations are advisable until the migration process executes error-free and resource requirements are precisely quantified. Coordinate verification methods with application teams in advance, as different migration approaches, particularly logical methods, impose stricter data integrity validation requirements. This phase serves as the final validation before production migration, confirming that the success criteria are achievable under realistic conditions.

Data Validation

Validation requirements correlate directly with the selected migration method. Physical methods impose simpler validation demands. Once the target database reaches the same SCN as the source, no logical validation is required in theory, because physical consistency guarantees data integrity.

Logical methods demand more rigorous verification. Methods like GoldenGate require row-level validation to confirm data accuracy and completeness across all migrated objects. This validation complexity increases with database size and schema complexity.
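To make the idea of row-level validation concrete, the sketch below compares per-row digests between a source and a target table. It is a simplified illustration, not a description of how GoldenGate or any Oracle tool validates data: it uses in-memory SQLite databases from the Python standard library as stand-ins for the two databases, and the table `orders`, its columns, and the helper names are hypothetical.

```python
import hashlib
import sqlite3


def row_digests(conn, table, key_column):
    """Map each key value to a SHA-256 digest of the full row.

    The table and column names are interpolated into the SQL text,
    so they must come from a trusted list, never from user input.
    """
    cursor = conn.cursor()
    cursor.execute(f"SELECT * FROM {table} ORDER BY {key_column}")
    columns = [d[0] for d in cursor.description]
    key_index = columns.index(key_column)
    digests = {}
    for row in cursor:
        payload = "\x1f".join(repr(v) for v in row).encode("utf-8")
        digests[row[key_index]] = hashlib.sha256(payload).hexdigest()
    return digests


def compare_tables(source, target, table, key_column):
    """Report keys that are missing, extra, or different in the target."""
    src = row_digests(source, table, key_column)
    tgt = row_digests(target, table, key_column)
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "extra_in_target": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


def make_db(rows):
    """Create a throwaway in-memory database with a hypothetical table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return conn


source = make_db([(1, 10.0), (2, 20.0), (3, 30.0)])
target = make_db([(1, 10.0), (2, 25.0)])
print(compare_tables(source, target, "orders", "id"))
```

This version holds all digests in memory, so it suits small tables or samples only. For large tables, comparing in key ranges or computing checksums inside the database would be the more realistic design.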

Early coordination with application development teams optimizes the validation approach. In many cases, existing application-level verification processes can be adapted for migration validation, reducing implementation effort. Additionally, certain object categories—such as temporary tables, staging tables, or transient data structures—may be excluded from validation scope altogether, streamlining the overall process without compromising data integrity.

Define validation scope and methodology during the planning phase, not during testing. This ensures adequate time allocation and prevents schedule delays from unanticipated validation requirements.