Types of Migration Methods

Migration methods can be categorized based on the smallest unit processed by the migration utility. This leads to four groups: physical, logical, hybrid and data replication.

Physical migration approach

Physical migration methods operate by transferring database files and their underlying data blocks directly from source to target. Their primary appeal lies in simplicity, both in execution and in post-migration verification. Because the database is moved as a complete set of files rather than as individual objects, the risk of data inconsistencies is substantially lower. This advantage is further reinforced when the migration set includes dictionary files, which carry the database’s structural definitions. Common physical migration methods include RMAN backup-based migration, Pluggable Database relocation, and Physical Standby conversion. Verifying a successful migration is straightforward, provided no object-level changes, such as recompilation or index rebuilds, have been performed on the migrated target database.

Logical migration approach

Logical migration methods, by contrast, operate at the object level: the unit of migration is the individual database object, whether a table, index, stored procedure, or otherwise. Objects that contain no actual data records, such as views, scheduled jobs, and indexes, are migrated exclusively through their DDL definitions rather than their physical storage. Data Pump is the de facto standard for logical migrations of Oracle databases. The original Export/Import utilities are obsolete and have not been recommended for general use since 11g, yet they have been retained in subsequent releases to support importing legacy dump files.

Hybrid migration tools

Transportable migration methods lend themselves to both logical and physical approaches, which is why they are more accurately placed in a hybrid category of their own. Transportable is a mature technology, initially released in Oracle 8i, with Oracle 10g marking a significant advancement by introducing cross-platform transportable functionality. By transportable technology we refer to two distinct methods: Full Transportable Export/Import (FTEX) and Transportable Tablespaces (TTS). The TTS variant used for cross-platform migration is commonly referred to as Cross-Platform Transportable Tablespaces (XTTS). Both FTEX and XTTS are discussed extensively in their respective articles. The majority of application data in transportable methods is migrated by reusing the source database datafiles directly — the physical component — while the metadata and structural definitions held in the data dictionary are transferred through Data Pump.

Data Replication

The fourth group is data replication solutions. Their principal advantage is the minimal downtime requirement, often marketed as “zero-downtime” migration, though achieving that service level across the entire application stack demands careful planning and configuration across multiple layers, not only at the database level. A secondary benefit is the extended verification window: with source and target systems running concurrently, validation can proceed without time pressure. However, replication methods impose significantly more complex verification requirements than the other groups. Consider a single replicated table. Comparing row counts between source and target may seem sufficient, and it is indeed enough for methods like Data Pump. Yet row counts do not capture row-level updates. For example, an UPDATE on the source that fails to replicate leaves the row counts identical despite a data mismatch. This additional verification requirement does not apply to Data Pump, since export and import are performed during the cutover period, when the source no longer receives changes. Physical migration methods such as RMAN are simpler still in this regard, since consistency verification is performed at the datafile level.

Data replication solutions come with additional challenges of their own, such as the setup and configuration overhead of the replication tool and, in most cases, modifications to the database model itself, for example the addition of unique integrity constraints. This level of structural change necessitates direct engagement from application architects. Therefore, a migration through replication tools should account for the additional time that their higher complexity demands.

There are many data replication solutions on the market. GoldenGate is Oracle’s replication solution. Third-party alternatives include Quest SharePlex and Qlik Replicate, among others. Oracle’s Logical Standby database, while not a replication solution in the strict technical sense, shares enough operational and configuration characteristics with these tools to warrant its inclusion in this group.

This discussion of replication solutions aims to highlight the importance of precise requirement definition. Requirement definition is discussed in more detail here. The downtime requirement should be carefully evaluated with service owners, highlighting the associated costs (training, configuration, testing time and licensing).

Considering the categorization criteria discussed above, commonly used migration tools can be summarized as:

(1) Physical methods

- RMAN Backup & Restore (same or cross-platform)

- Pluggable Databases

- Physical Standby Database

(2) Logical methods

- Data Pump (Dump files / Network)

(3) Hybrid methods (Transportable)

- Full Database Transportable (FTEX)

- Transportable Tablespaces (TTS)

- Transportable + Incremental Backup

(4) Data Replication

- Replication solutions (GoldenGate, Qlik Replicate, SharePlex, etc.)

- Transient Logical Standby Database

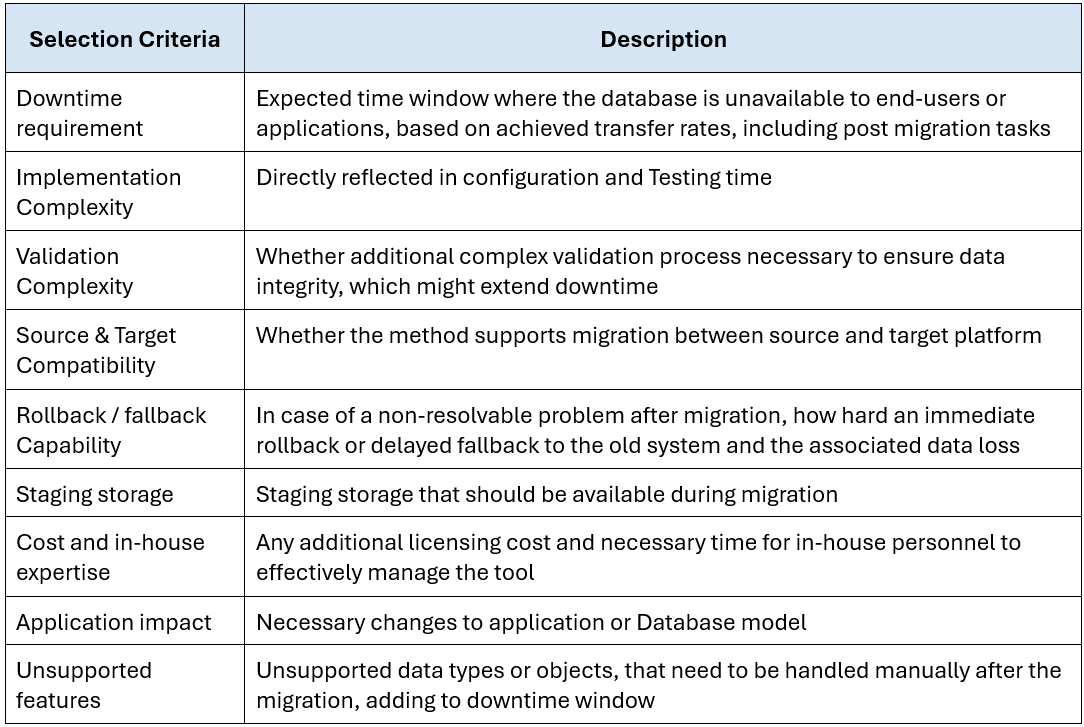

Selection criteria

Choosing the right migration method is a multi-factor decision that should follow a structured evaluation, which considers environment-specific requirements and team capabilities. Two of the most important factors driving this decision are the acceptable downtime requirement and overall method complexity, both of which directly affect project timeline. An effective way to navigate this decision is by utilizing a migration selection matrix, where different selection factors are weighed and scored against available migration methods. While some elements, such as licensing costs, are straightforward to quantify, other factors – such as actual complexity and data transfer rates – are environment-dependent and often require a Proof of Concept (POC) to produce a realistic evaluation.
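The selection matrix described above can be sketched as a weighted sum. The following is only an illustration under stated assumptions: the factor names, the weights, the scores, and the candidate labels are all hypothetical placeholders, not measured or recommended values. In a real evaluation they would come from the project requirements and, where needed, from a POC.

```python
# Hypothetical weights per selection factor (they sum to 1.0).
WEIGHTS = {"downtime": 0.5, "complexity": 0.3, "licensing_cost": 0.2}

# Hypothetical scores per candidate method: 1 is best, 5 is worst.
SCORES = {
    "candidate_a": {"downtime": 4, "complexity": 1, "licensing_cost": 1},
    "candidate_b": {"downtime": 5, "complexity": 2, "licensing_cost": 1},
    "candidate_c": {"downtime": 1, "complexity": 5, "licensing_cost": 5},
}


def weighted_score(scores, weights):
    """Return the weighted sum of one method's scores (lower is better)."""
    return sum(weights[factor] * scores[factor] for factor in weights)


def rank_methods(scores_by_method, weights):
    """Return (method, score) pairs sorted from best to worst."""
    ranked = [
        (method, round(weighted_score(scores, weights), 2))
        for method, scores in scores_by_method.items()
    ]
    return sorted(ranked, key=lambda pair: pair[1])


if __name__ == "__main__":
    for method, score in rank_methods(SCORES, WEIGHTS):
        print(f"{method}: {score}")
```

Changing the weights changes the ranking, which is the point of the exercise: a project that weighs downtime more heavily will favor a different candidate than one that weighs complexity or cost.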

The following section discusses three of the most commonly considered selection factors for that decision.

Downtime requirement

Physical migration approaches generally achieve higher data transfer rates for large databases, especially those with a large number of objects. One reason is that some of these methods bypass the database memory structures, while others use dedicated areas of the SGA for efficient data copy. This lower-level physical access shortens the data transfer phase, especially for large data volumes. A second factor, which works against Data Pump, is object dependency resolution. Since Data Pump works at the object level, all objects upon which a given object depends must be loaded before the object itself. This ordering is managed by the master control process, which distributes the load in parallel across worker processes. However, keeping all worker processes fully utilized for the whole run is almost impossible. Physical migration methods, conversely, do not suffer from this problem and can achieve far better resource utilization through more effective parallelism.
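To make the dependency constraint concrete, the sketch below orders a handful of objects so that each one is loaded only after the objects it depends on, and groups them into "waves" that could run in parallel. It is a simplified model and not Data Pump's actual scheduling algorithm: the object names are hypothetical, every object is assumed to take the same time to load, and the standard-library `graphlib` module (Python 3.9+) stands in for the real coordinator.

```python
from graphlib import TopologicalSorter

# Hypothetical objects: each key depends on the objects in its set.
DEPENDENCIES = {
    "CUSTOMERS": set(),
    "ORDERS": set(),
    "ORDERS_IDX": {"ORDERS"},
    "ORDER_SUMMARY_VIEW": {"CUSTOMERS", "ORDERS"},
    "REPORT_PROC": {"ORDER_SUMMARY_VIEW"},
}


def load_waves(dependencies):
    """Group objects into waves; a wave can start only after the previous one is done."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # objects whose dependencies are all loaded
        waves.append(ready)
        sorter.done(*ready)
    return waves


def idle_worker_slots(waves, workers):
    """Count worker slots left unused because too few objects were ready in a wave."""
    return sum(max(workers - len(wave), 0) for wave in waves)


if __name__ == "__main__":
    waves = load_waves(DEPENDENCIES)
    for number, wave in enumerate(waves, start=1):
        print(f"wave {number}: {wave}")
    print("idle slots with 4 workers:", idle_worker_slots(waves, workers=4))
```

Even in this toy model, the last wave contains a single object, so most workers sit idle while it loads. A file-level copy has no such ordering constraint, which is one way to picture why physical methods parallelize more evenly.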

Complexity

Logical migration through Oracle Data Pump processes database objects such as tables, LOBs, scheduler jobs, and other object types one by one. This object-level granularity carries a higher risk of missing and invalid objects, caused by failures during loading or by the absence of dependent objects. Physical migration approaches are strong in this area, especially RMAN, where the whole dictionary is migrated block by block. Hybrid methods such as transportable, where logical objects (views, procedures, etc.) are loaded from a dump file, also carry this risk. Comprehensive pre-migration testing mitigates these risks. Each application is unique in the types of objects used to enforce application logic and in the database features it relies on. Therefore, a full-size database test is highly recommended. Otherwise, post-migration object-level problems will extend verification time and subsequently push back the go-live schedule. Physical migration holds an edge due to the lower likelihood of object-level failures, which translates to reduced testing overhead and shorter project timelines.

At the other end of the scale stands replication, the most demanding migration method with regard to planning, configuration, testing and troubleshooting. One important point to consider when evaluating the complexity of replication is the row uniqueness requirement, as published here for GoldenGate. In simple terms, each table needs a unique key (primary key or unique constraint) so that rows can be uniquely identified for DML (delete, update) operations in the target database. Ideally, this is a physical key implemented through a primary key or a unique index. Alternatively, many replication solutions offer table-level configuration options, where row uniqueness can be defined on a specific set of columns. The time and effort needed to create these indexes or configure the replication solution must be carefully planned, along with the associated collaboration with other stakeholders (for example, application architects). Another challenging task that must be planned appropriately when choosing replication is the data verification phase. Here, verification should be performed at the row level. Comparing row counts on both systems is not enough, since an update that was not applied leaves both tables with exactly the same number of records and therefore goes undetected. More rigorous approaches include in-house row hashing or dedicated verification tools such as Oracle Veridata, Qlik, or SharePlex.
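The in-house row hashing idea can be sketched as follows. This is a minimal illustration, not a production verification tool and not how any of the named products work: rows are held in memory as dictionaries keyed by the table's unique key, the sample data is invented, and the helper names `row_digest` and `compare_tables` are hypothetical. A real implementation would stream rows from both databases and would have to normalize data types, character sets and number formats before hashing.

```python
import hashlib


def row_digest(row):
    """Hash all column values of one row into a hex digest."""
    # NULL (None) is mapped to an empty string, mirroring Oracle's treatment
    # of empty strings as NULL; the unit separator keeps columns apart.
    payload = "\x1f".join("" if value is None else str(value) for value in row)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def compare_tables(source_rows, target_rows):
    """Compare two tables given as {unique_key: tuple_of_column_values}."""
    source = {key: row_digest(row) for key, row in source_rows.items()}
    target = {key: row_digest(row) for key, row in target_rows.items()}
    common = source.keys() & target.keys()
    return {
        "missing_in_target": sorted(source.keys() - target.keys()),
        "extra_in_target": sorted(target.keys() - source.keys()),
        "mismatched": sorted(key for key in common if source[key] != target[key]),
    }


if __name__ == "__main__":
    # Invented sample data: the UPDATE on key 2 never reached the target.
    source_rows = {1: ("Alice", "ACTIVE"), 2: ("Bob", "INACTIVE")}
    target_rows = {1: ("Alice", "ACTIVE"), 2: ("Bob", "ACTIVE")}

    print("row counts equal:", len(source_rows) == len(target_rows))
    print(compare_tables(source_rows, target_rows))
```

The row counts match in this example, yet the digest comparison reports key 2 as mismatched. Note that the comparison only works because every row carries a unique key, which is the same row uniqueness requirement the replication tool imposes.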

Performance considerations

A reasonable expectation is that all methods should deliver equivalent database performance after migration. This is not the case. Data Pump offers a unique benefit compared to physical migration methods. The latter copy the physical block structure of tables and indexes as is to the new system. Data Pump, on the other hand, loads table records into new physical structures (segments, extents), which resolves most existing row chaining and row migration in the source database. Table size and overall record organization are therefore optimized. Storage optimization also extends to indexes, since they are rebuilt in the new system. The improvement shows up as optimized I/O, with fewer block reads in the buffer cache. This optimization applies only to fragmented tables and indexes, which are subject to heavy DML activity (updates, deletes). In a data warehouse database, where data is loaded in batches and later used for reporting with minimal subsequent DML, no major improvement should be expected for such non-fragmented data. To sum up, a full reorganization of the database is implicitly performed with logical migration. For physical migrations, this task can be planned as a post-migration task in a maintenance window if it proves valuable.

A non-exhaustive list of elements that need to be evaluated and included in the selection matrix is given in Table 2-1 below. Some of these elements can only be properly weighted after testing. Moreover, the risks associated with each method also need to be considered during evaluation. Risk elements are briefly discussed here.

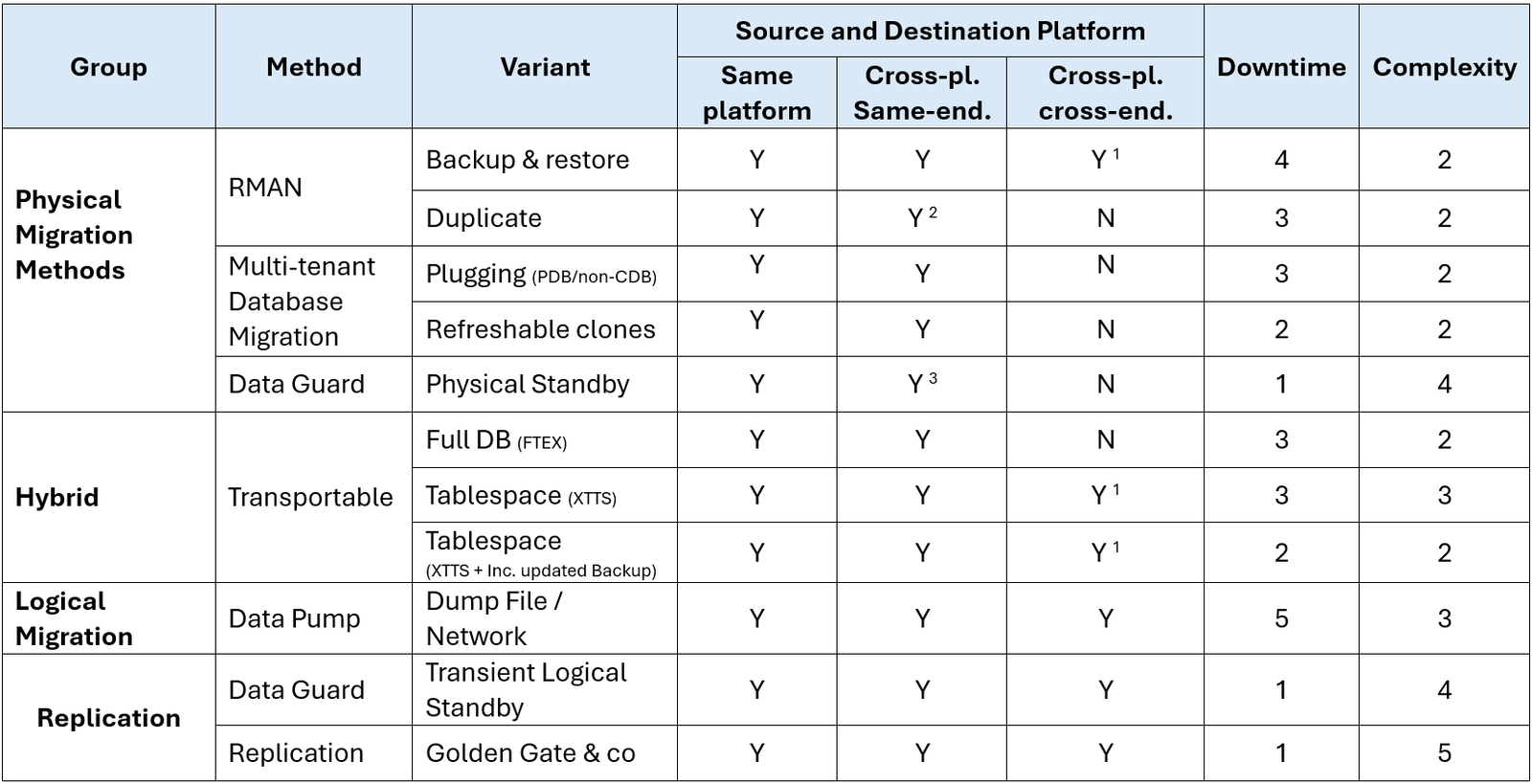

High level comparison

The following table lists migration methods and their indicative ratings across three of the selection criteria discussed above. The first is the source and destination platform, and whether the method supports cross-platform and cross-endian migration. The second is the downtime requirement during migration, where 1 is the lowest. The last is the complexity of the whole project (planning, time, effort), where 1 is the simplest to implement. Ratings should be read as bands rather than scores on a scale. They reflect my own professional experience, and the migration process is very specific to each environment. Therefore, the ratings below represent suggested bands at the time of writing rather than generalized weights.

(1) Data file conversion is necessary

(2) Restrictions on which cross-platform migrations are supported (Doc ID 1079563.1)

(3) What differences are allowed between a Primary Database and a Data Guard Physical Standby Database (Doc ID 413484.1)